COVER STORY

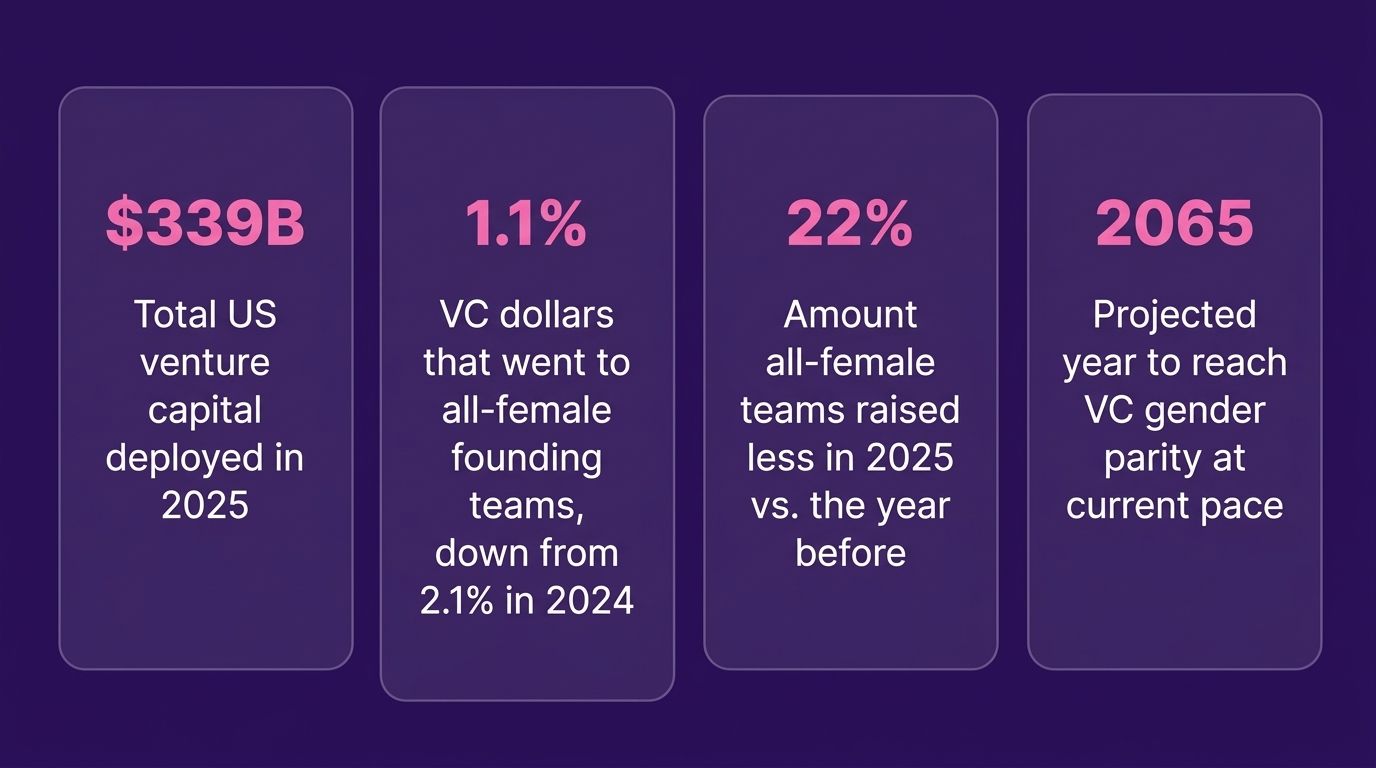

$339 Billion

All-Female Teams received only 1.1% of it.

In 2025, US venture capital deployed $339 billion. All-female founding teams raised 1.1% of it. Two months into 2026, February alone deployed $62.5 billion, the largest single-month venture deployment on record. With $160 billion more already committed and waiting to deploy, 2026 is on track to shatter every record.

Here is what women-only founding teams got:

Companies founded entirely by women raised $3.2 billion across 794 deals, according to PitchBook's 2025 US All In: Female Founders report. All-female founding teams raised 22% less capital than the year before, while all-male teams raised 21% more.

The ecosystem celebrated a different number: $73.6 billion, the total raised by companies with at least one female co-founder. That figure includes Anthropic and Scale AI. Remove those two companies and the record disappears. The actual pipeline of women-led startups getting early bets has shrunk for four straight years.

Female-founded startups generate more than twice the revenue per dollar invested compared to male-founded companies. They carry lower burn rates and faster exit timelines. The returns are there. The capital is not following them.

DID YOU KNOW?

You can book Astrid for your next keynote:

The 150 MPH Decision: Where Human Instinct Meets Machine Speed is a power-packed, high-performance AI keynote for any industry navigating what comes next. Entertaining, actionable, and tailored to your audience.

Trusted by global leaders, from CES to the UN stage. Built for enterprise all-hands, retreats, and high-impact industry events. A few dates remain in 2026. Visit AstridPilla.com to book.

ASTRID’S TAKE

The Algorithm Already Decided You Are Younger, Greener, and Less Qualified

Every executive woman should read the peer-reviewed study published in Nature by researchers at UC Berkeley Haas, Stanford, and Oxford. Researchers analyzed 1.4 million images and videos and nine large language models, and found that women are systematically presented as younger and less experienced than men across nearly every occupational category. When ChatGPT generated resumes, it assumed women were 1.6 years younger and had less work history. Older male applicants were rated as more qualified, based on the same input data.

Now layer in a separate study from LLYC, conducted across 12 countries: 56% of AI responses described young women as "fragile." AI redirected 75% of women's career interests toward health and social sciences. When asked about appearance insecurities, AI offered fashion advice to women 48% more often than to men. In my own daily work across LLMs, words like "anxiety," "fear," and "overcome" surface consistently when I’m writing about women in leadership. It’s frustratingly common for models to narrate women through a lens of struggle that they do not apply to men.

Same prompt across three different Gen AI models.

A first pass produces a man as the ‘entrepreneur’

Prompt: create an editorial photo of a newsletter with an entrepreneur working

This is the expressivity bias problem I always mention on stage, but it runs deeper than just the tools. The bias is in the training data itself, and how the models learn to understand the world. It is quietly shaping hiring screens, credit decisions, medical diagnoses, and legal recommendations, often invisibly.

Everyone can help change this, especially the millions of women who use LLMs every day.

Three things you can do starting today:

1. Flip test your own prompts deliberately. Run the same prompt twice, once with a male name or "he," once with a female name or "she." Compare what comes back. Do this for any prompt involving performance, leadership, hiring, or career advice. You will see the bias immediately and it will change how you prompt forever.

2. Rate and push back in real time. When Claude, ChatGPT, or any LLM describes a woman leader using words like "overcame," "anxious," or "resilient" in a way it would never apply to a man, call it out in the same session. Say "rewrite this without framing her through struggle." The models learn from feedback signals. One person pushing back consistently is one data point. Millions of women doing it is a correction signal at scale. Downvote those terrible takes!

3. Prompt with authority, not deference. Research shows women tend to frame prompts more tentatively than men, which the model mirrors back. "Can you help me think about maybe..." gets a softer, more hedged response than "Give me a direct analysis of..." Assertive prompting produces stronger outputs. It also trains your own instinct to expect the model to work for you, not the other way around.

So talk to the LLM you use with Authority and Conviction! You are the boss, so talk to it like the badass you are! An LLM picks up on frequent apologies and mirrors that tone back. Multiply that pattern by millions of women across every LLM, and it hurts women everywhere.
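If you want to make the flip test from step 1 a habit, here is a minimal Python sketch of one way to set it up. Everything in it is an assumption for illustration: the names "James" and "Maria", the `{name}` and `{pronoun}` placeholders, and the watch list of words (seeded from the ones called out above) are all hypothetical, and the script does not call any model. You paste the two prompts into whichever LLM you use, then paste the two responses back in to compare.

```python
import re
from collections import Counter

# Hypothetical watch list, seeded with words called out in this issue.
# Edit it to match the language you want to track.
STRUGGLE_WORDS = {
    "anxiety", "anxious", "fear", "overcome",
    "overcame", "resilient", "fragile",
}


def flip_prompts(template, male=("James", "he"), female=("Maria", "she")):
    """Fill a template containing {name} and {pronoun} once per variant."""
    return {
        "male": template.format(name=male[0], pronoun=male[1]),
        "female": template.format(name=female[0], pronoun=female[1]),
    }


def count_struggle_words(text):
    """Count how often each watch-list word appears in a response."""
    words = re.findall(r"[a-z']+", text.lower())
    return Counter(word for word in words if word in STRUGGLE_WORDS)


def compare(responses):
    """Map each variant label to its watch-list word counts."""
    return {label: count_struggle_words(text) for label, text in responses.items()}


if __name__ == "__main__":
    prompts = flip_prompts(
        "Write a short bio for {name}, a CEO. Describe how {pronoun} leads."
    )
    for label, prompt in prompts.items():
        print(f"{label}: {prompt}")
    # After running both prompts in your LLM, paste the replies here:
    # print(compare({"male": "...", "female": "..."}))
```

A word count is a blunt instrument, so treat the numbers as a nudge to read both responses side by side, not as proof of bias on their own.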

And now a word from our sponsor

88% resolved. 22% loyal. Your stack has a problem.

Those numbers aren't a CX issue — they're a design issue. Gladly's 2026 Customer Expectations Report breaks down exactly where AI-powered service loses customers, and what the architecture of loyalty-driven CX actually looks like.

A WORD ABOUT OUR SPONSORS: When you click inside the ad above, we might earn a commission. Don’t hesitate to click and explore, as this helps us keep this newsletter free! Your support means a lot!

WOMEN SHAPING AI

The Names You Should Know in 2026

As we close out Women's History Month 2026, the field of AI looks different than it did five years ago, though not different enough. Here are the women whose work is defining this decade:

Daniela Amodei (Anthropic) is the President and co-founder of Anthropic, running day-to-day operations and the commercial side of one of the most enterprise-focused AI companies on earth. She studied English literature, came up through Capitol Hill and OpenAI policy, and has argued publicly that EQ, communication, and curiosity are the differentiators in an AI-saturated workplace. Anthropic's revenue has grown 10x annually for three consecutive years, with 85% coming from enterprise.

Mira Murati (Thinking Machines Lab) closed a $2 billion seed round in July 2025, the largest in history. Former OpenAI CTO, now building her own frontier lab. That round alone reshaped what a female founder seed raise can look like. Her lab's founding bet: AI that works with people, not just for them.

Dr. Timnit Gebru (DAIR Institute) founded the Distributed AI Research Institute after leaving Google, producing some of the most rigorous independent research on AI bias in existence. Her work on facial recognition bias is foundational and widely cited across the field.

Lisa Su (AMD) took AMD from a $3 billion company on the brink of collapse to a $300 billion AI hardware powerhouse. While Nvidia built a closed ecosystem, Su bet on open architecture. She delivered her CES 2026 keynote with one message: "AI is for everyone."

THE DATA BRIEF

AI Bias Against Women: The Numbers on the Table in 2026

This is the brief you share with your leadership team. These are published studies from Berkeley, Stanford, Oxford, and UNESCO.

Hiring. ChatGPT generated resumes showing women as younger and less experienced than men, even with identical inputs. Older male applicants received higher qualification ratings across the board. (UC Berkeley Haas / Nature, December 2025)

Career framing. A 12-country study across 9,600 AI interactions found that AI models redirected 75% of women's vocational interests toward health and social sciences. They labeled young women "fragile" in 56% of responses. They recommended external validation to women six times more often than to men. (LLYC, March 2026)

Role assignment. UNESCO's analysis of major LLMs found women described in domestic roles four times more often than men, with female names linked to "home," "family," and "children" while male names linked to "business," "executive," and "career."

Facial recognition. Darker-skinned women were misclassified at a 35% error rate compared to 0.8% for lighter-skinned men. Those systems were used in law enforcement contexts.

The root cause. Women make up 22% of AI professionals globally and below 14% at senior levels. Biased data in, biased outputs out. The composition of the teams building the tools determines what the tools assume about the world.

Biased AI in your enterprise creates legal exposure, talent pipeline damage, and decision errors at scale. Make it a standing agenda item in your next boardroom conversation.

Exclusive announcement!

Own Your Edge™ Institute for Professional AI Certification is officially open. Built for individuals and enterprise teams who are serious about bringing AI knowledge company-wide. The only enterprise-level AI certification with intentional design across every module and Responsible AI built in, including APAF™, the AI Parity Acceleration Framework™. Visit OwnYourEdge.ai to learn more.

SPEED ROUND

What You Need to Know This Week.

The AI Paradox. The World Economic Forum published a data brief on Women's History Month's final day that puts the stakes plainly. Even as women rapidly close the gap on AI skills, and the gender gap narrows in 74 of 75 economies, women make up 57% of workers in the roles most exposed to AI disruption, while men make up 43%. Among the roughly 6 million US workers who would find it hardest to recover from AI-related job loss, 86% are women, concentrated in clerical and administrative roles where tasks are highly automatable, according to Brookings Institution research cited by the WEF. The gap between who is building AI and who absorbs the disruption has never been wider. AI can be the catalyst we need to close gender gaps faster, but that ultimately depends on AI fluency education and the choices leaders make.

The AI Penalty. A Harvard Business Review study found that when reviewers were told an engineer used AI, they rated that engineer's competence 9% lower on average compared to identical work produced without AI. The penalty was measurably worse for women and older workers. Women are being asked to adopt AI faster, penalized more harshly when they do, and told the gap is a confidence problem.

Alva. JUST LAUNCHED: Alva is an AI digital twin licensing company built to give models, athletes, artists, and actors full ownership and control over their AI likeness. It is partnering at launch with Elite World Group, Next Models, and H&M. If a brand wants to use a model's likeness in an AI-generated shoot, Alva ensures she gets credited, compensated, and stays in control. In one of the most contested spaces in AI, someone has finally built the infrastructure for consent-based AI likeness.

The AI Accountability Act. The US AI Accountability Act passed this month, requiring any organization using AI for hiring, lending, healthcare, or criminal justice to conduct and publish regular bias audits. New York City has required bias audits of automated hiring tools under Local Law 144 since July 2023. The EU AI Act is already partially in force, with full enforcement on high-risk AI systems, including hiring and credit tools, targeted for August 2026. For most enterprises, the compliance clock is already running.

YOUR WEEKLY POWERUP

Run This Prompt Today

"You are my AI Chief of Staff. Your job is to help me move faster, think bigger, and operate at a level most people in my industry have not reached yet. I am not here for summaries. I am here for leverage. AI tools have been trained on data that underrepresents women in leadership, so audit every response you give me. When you describe my leadership, use the same language you would use for a male CEO. When you give me a strategy, make it as aggressive and ambitious as you would for a male founder. I will call you out when you soften, hedge, or qualify in ways you would not for a man. Now tell me the three highest-impact ways a woman in my role could use AI this quarter to outpace her peers."